About the Project

LEGIMaC (Low-Energy Gamma Imaging via Machine Learning in Calorimeters) is a PNRR-funded project that developed neural network-based algorithms for waveform analysis in SiPM-coupled scintillator calorimeters. By training 1D convolutional neural networks to distinguish scintillation events from dark noise and to resolve temporal pile-up, the project pushed detection thresholds to energies previously inaccessible, and demonstrated real-time FPGA deployment via HLS4ML at power levels compatible with space missions.

Introduction: The Low-Energy Frontier of Gamma Detection

Gamma-ray calorimeters based on inorganic scintillators coupled to Silicon Photomultipliers (SiPMs) represent the state of the art for space-based high-energy astrophysics instruments. SiPMs offer high photon detection efficiency, mechanical robustness, insensitivity to magnetic fields, and the ability to operate over a wide temperature range, all critical properties for orbital detectors. Yet SiPMs carry an intrinsic limitation that becomes particularly severe at low energies: their dark count rate. SiPM cells fire spontaneously, giving device-level dark count rates of tens of kilohertz to megahertz depending on temperature, and each discharge generates a single-photoelectron pulse that is, individually, indistinguishable from the pulse produced by a genuine scintillation photon.

When the energy deposited by a gamma photon is low enough that the corresponding scintillation light produces only a handful of detected photons, the dark noise floor begins to overlap with the signal region of the energy spectrum. Traditional trigger systems based on amplitude thresholds or leading-edge discriminators face an irreconcilable trade-off: lowering the threshold to capture low-energy gamma events inevitably accepts a large background of dark-count-induced false triggers, while raising it suppresses the very signal region of interest. The result is a hard floor in effective detection energy that limits the scientific reach of space calorimeters.
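The trade-off can be made concrete with a back-of-envelope estimate. The sketch below assumes independent, Poisson-distributed dark counts, ignores optical crosstalk and afterpulsing, and uses illustrative values (a 1 MHz dark count rate and a 100 ns integration window) that are assumptions rather than project measurements.

```python
import math

def prob_at_least(n_pe, dark_rate_hz, window_s):
    """Probability that at least n_pe dark counts fall inside one window.

    Assumes independent, Poisson-distributed dark counts (no crosstalk,
    no afterpulsing), which is a simplification of a real SiPM.
    """
    mu = dark_rate_hz * window_s  # mean number of dark counts per window
    below = sum(math.exp(-mu) * mu**k / math.factorial(k) for k in range(n_pe))
    return 1.0 - below

# Illustrative values only, not taken from the project.
DARK_RATE_HZ = 1.0e6
WINDOW_S = 100e-9

for threshold in (1, 2, 3, 5):
    p = prob_at_least(threshold, DARK_RATE_HZ, WINDOW_S)
    print(f"threshold {threshold} p.e.: P(false trigger per window) = {p:.2e}")
```

Under these assumptions each extra photoelectron of threshold suppresses accidental triggers by roughly an order of magnitude, but it also removes every genuine event that yields fewer detected photons than the threshold.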

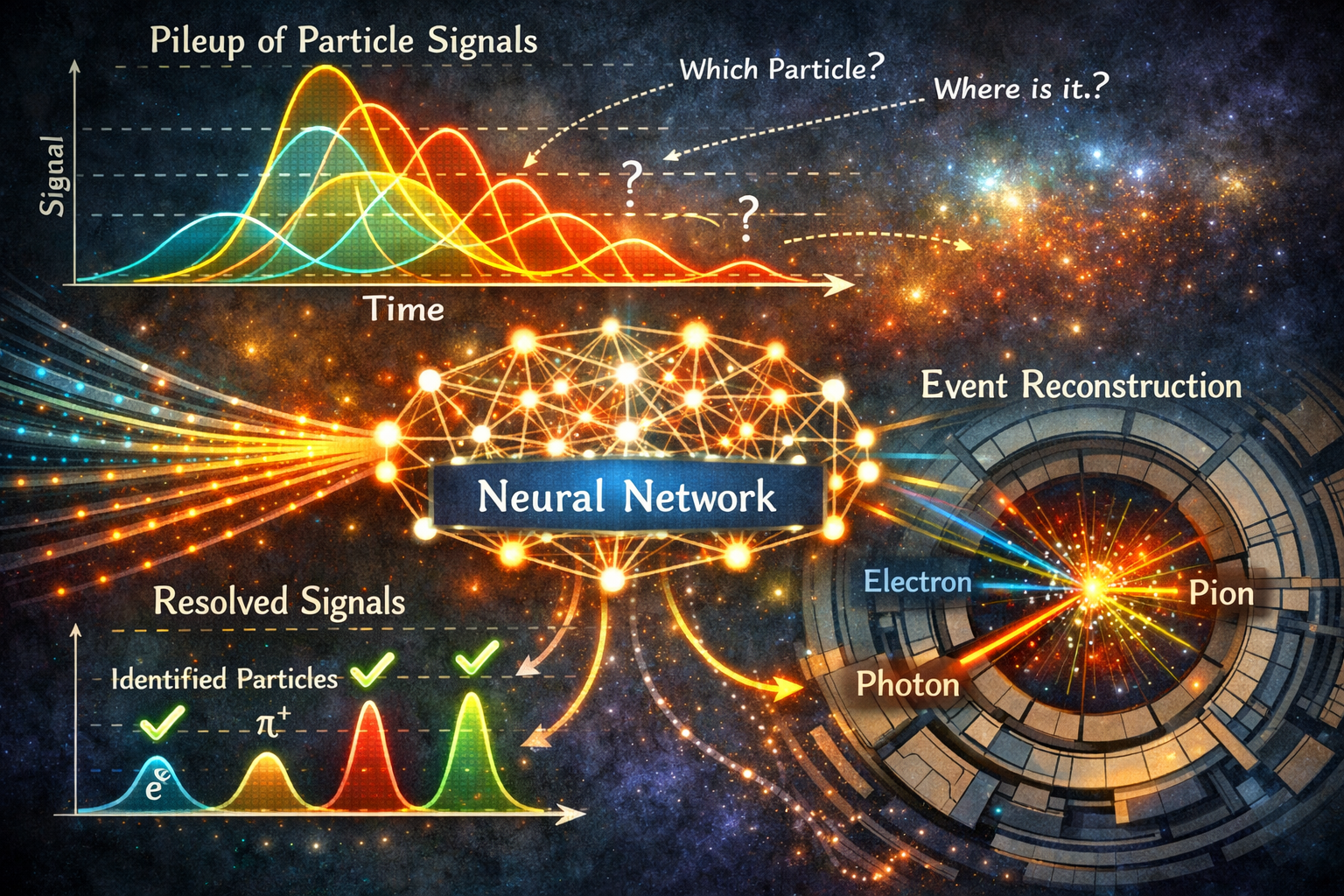

A second challenge compounds the first in high-rate environments: temporal pile-up. When multiple photons arrive at the same scintillator pixel within a time window comparable to the scintillation decay constant, their signals overlap into a composite waveform that appears as a single event with incorrectly reconstructed energy. In orbit, particularly when crossing regions of high particle flux such as the South Atlantic Anomaly, pile-up can severely distort energy spectra, forcing instruments to discard data through veto or prescaling systems — a direct loss of scientific information.
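How quickly pile-up becomes significant can be estimated from Poisson statistics. The following is a minimal sketch, assuming uncorrelated arrivals and a hypothetical 200 ns pulse duration; neither value is taken from the project.

```python
import math

def pileup_probability(rate_hz, window_s):
    """Probability that at least one further event arrives within window_s
    of a given event, for Poisson arrivals at rate_hz."""
    return 1.0 - math.exp(-rate_hz * window_s)

WINDOW_S = 200e-9  # assumed pulse duration, illustrative only

for rate in (1e3, 1e5, 1e6, 1e7):
    p = pileup_probability(rate, WINDOW_S)
    print(f"{rate:>10.0e} Hz -> pile-up probability {p:.3f}")
```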

The LEGIMaC project, funded under the Italian PNRR – Missione 4, Componente 2 (Dalla ricerca all’impresa), Spoke 3 of the ICSC National Centre for HPC, Big Data and Quantum Computing, set out to overcome both of these limitations by applying machine learning to the raw waveform produced by the SiPM readout chain, enabling intelligent discrimination at the signal level rather than at the coarse level of integrated amplitude.

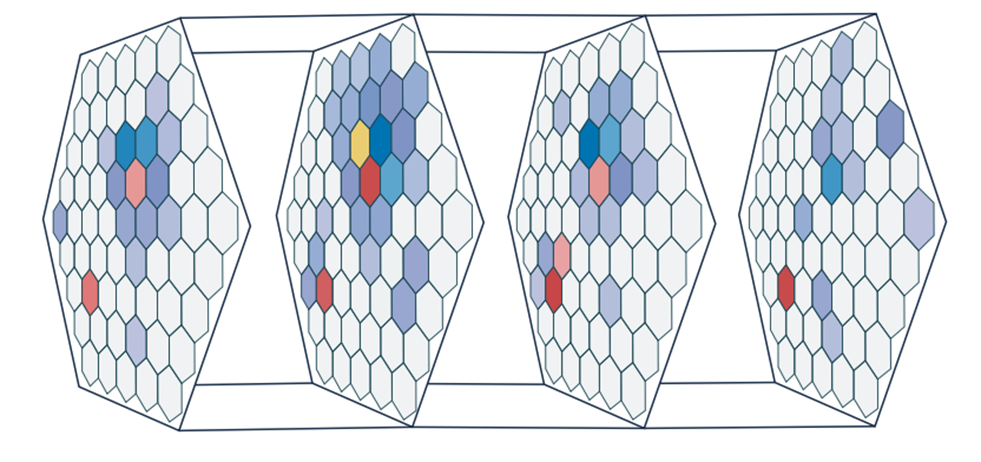

Illustration of the pile-up problem: multiple scintillation events arriving within a short time window produce a superimposed waveform that conventional electronics cannot decompose into individual gamma interactions.

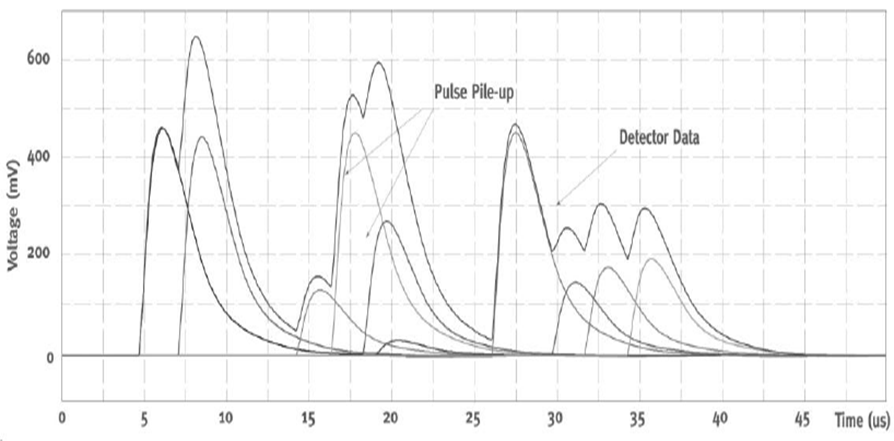

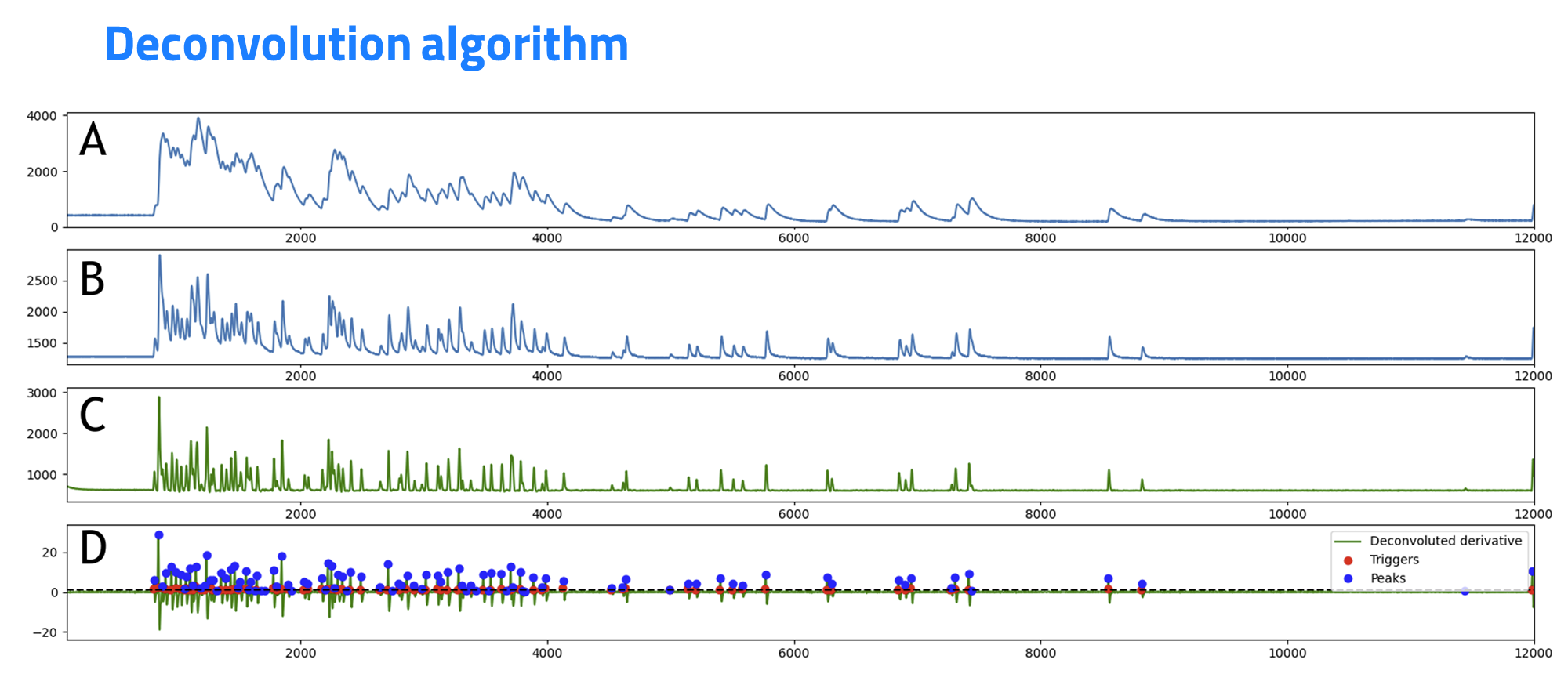

Traditional deconvolution approach applied to a pile-up waveform: while effective at moderate rates, classical methods degrade at high event densities and cannot distinguish dark noise from low-amplitude scintillation signals.

Project Objectives and Scope

LEGIMaC was structured around two closely related algorithmic challenges and their shared hardware implementation. The first challenge, dark noise discrimination, required training a neural network to recognise the subtle morphological difference between a single-photon pulse generated by a dark count and a genuine low-energy scintillation signal. Although both produce pulses of comparable amplitude, they differ in their temporal profile: dark counts arise from a single Geiger discharge and exhibit a characteristic fast rise and exponential decay, while scintillation events produce a pulse whose shape reflects the photon emission statistics of the scintillator material, with a longer and material-dependent decay constant. Exploiting this difference systematically and robustly across the full operating temperature range of a space instrument was the core objective of WP2.

The second challenge — pile-up resolution — required a network capable of decomposing a composite waveform into its constituent events, recovering the energy and arrival time of each individual photon interaction. This “pile-up resolver” (WP3) needed to remain effective even at the high event rates experienced during gamma-ray bursts or when crossing regions of anomalous particle flux in orbit.

Both algorithms were then targeted for deployment on an FPGA platform (WP4 and WP5), with a hardware data acquisition system interfaced directly to the FPGA to enable real-time processing. An important parallel activity (WP6) validated the full chain using laboratory radioactive sources, while the final work package (WP7) assessed the suitability of the proposed electronics — including the FPGA family and the front-end digitiser — for actual space qualification.

In the course of the project, the scope was further extended to include Pulse Shape Discrimination (PSD), exploiting the same CNN architecture to distinguish between different particle species (gamma and neutron) using the characteristic waveform signatures of organic scintillators such as EJ276.

Dataset Generation and Signal Modelling

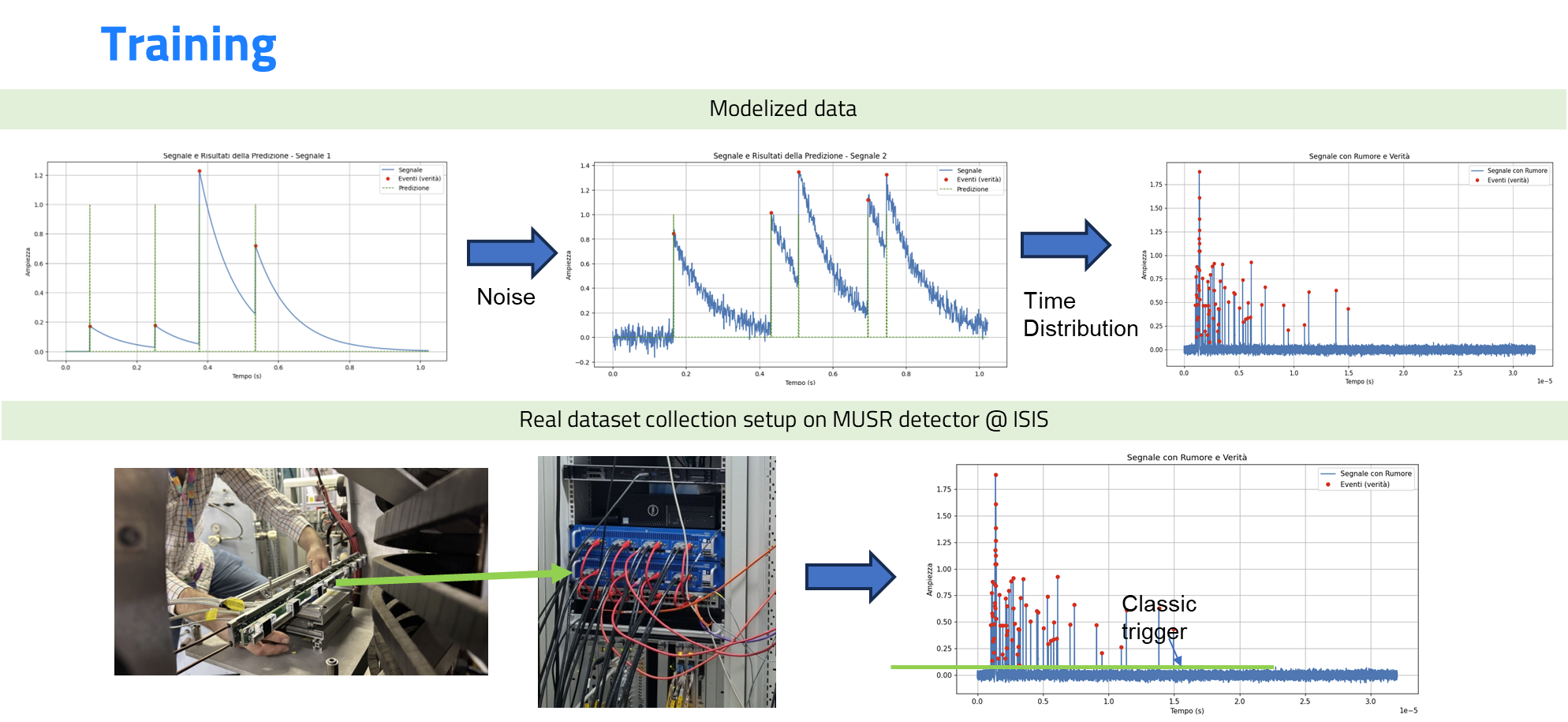

The foundation of any machine learning approach is the quality and realism of the training data. LEGIMaC invested significant effort in building simulation models that faithfully reproduce the response of SiPM-scintillator systems to low-energy gamma events, including all the non-ideal effects that determine the difficulty of the real classification problem.

The simulation framework was developed in Python and incorporates explicit models of dark current, intrinsic electronic noise, temperature-dependent dark count rate, and afterpulsing — the secondary firing of a SiPM cell shortly after a primary discharge, which produces a characteristic double-pulse structure that naive trigger systems can misidentify as a real event. The scintillation response model captures the material-dependent photon emission time distribution for the scintillator types of primary interest to the project (LYSO, GaGG, CsI(Tl)), including the primary and secondary decay constants and the statistical variation in the number of detected photons as a function of deposited energy.
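The ingredients listed above can be illustrated with a toy waveform generator. This is a minimal sketch, not the project's simulation framework: the pulse template, the single scintillation decay constant, and every numerical default are assumptions chosen for readability, and all function names are hypothetical.

```python
import math
import random

def spe_pulse(t_ns, t0_ns, tau_rise=1.0, tau_fall=20.0):
    """Single-photoelectron pulse: fast rise, exponential decay (arbitrary units)."""
    dt = t_ns - t0_ns
    if dt < 0:
        return 0.0
    return math.exp(-dt / tau_fall) - math.exp(-dt / tau_rise)

def poisson(rng, mean):
    """Knuth's Poisson sampler; adequate for the small means used here."""
    limit, k, p = math.exp(-mean), 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1

def simulate_waveform(rng, n_samples=512, dt_ns=1.0, dark_rate_hz=1e6,
                      afterpulse_prob=0.05, afterpulse_tau_ns=30.0,
                      mean_photons=0.0, scint_t0_ns=100.0, scint_tau_ns=40.0,
                      noise_sigma=0.02):
    """Return (samples, photon_times) for one trace; all defaults are illustrative."""
    duration_ns = n_samples * dt_ns
    rate_per_ns = dark_rate_hz * 1e-9
    times = []
    # Dark counts: Poisson process, i.e. exponential inter-arrival times.
    t = rng.expovariate(rate_per_ns)
    while t < duration_ns:
        times.append(t)
        # Afterpulse: delayed secondary discharge following a primary one.
        if rng.random() < afterpulse_prob:
            times.append(t + rng.expovariate(1.0 / afterpulse_tau_ns))
        t += rng.expovariate(rate_per_ns)
    # Scintillation photons: emission times follow the scintillator decay.
    for _ in range(poisson(rng, mean_photons)):
        times.append(scint_t0_ns + rng.expovariate(1.0 / scint_tau_ns))
    # Sum one pulse per detected photon and add Gaussian electronic noise.
    samples = [
        sum(spe_pulse(i * dt_ns, t0) for t0 in times) + rng.gauss(0.0, noise_sigma)
        for i in range(n_samples)
    ]
    return samples, times

rng = random.Random(0)
dark_only, _ = simulate_waveform(rng)
with_signal, _ = simulate_waveform(rng, mean_photons=5.0)
print(f"peak (dark only): {max(dark_only):.2f}, peak (5 photons mean): {max(with_signal):.2f}")
```

A more faithful model would add the secondary decay component and the temperature dependence of the dark count rate described above; both are omitted here to keep the sketch short.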

These simulations produced large datasets covering the critical signal-to-noise boundary region, where low-energy scintillation pulses and dark noise pulses overlap in amplitude and only the timing profile of the waveform carries discriminating information. Complementing the simulated data, real experimental data were collected in two important contexts. Laboratory measurements using calibrated radioactive sources provided ground-truth spectra against which algorithm performance could be benchmarked. High-rate data acquired at the SuperMUSR beamline at ISIS (UK) provided conditions analogous to a gamma-ray burst in orbit, with sudden extreme increases in event rate that stress-test the pile-up resolver under conditions far beyond typical laboratory rates.

Training curves for the CNN1D dark noise discriminator: loss and accuracy as a function of epoch, showing rapid convergence and generalisation from simulated to real detector data.

High-rate waveform data acquired at the SuperMUSR beamline at ISIS, used to validate the pile-up resolver in burst conditions representative of South Atlantic Anomaly crossings.

Machine Learning Approach

CNN1D for Waveform Classification

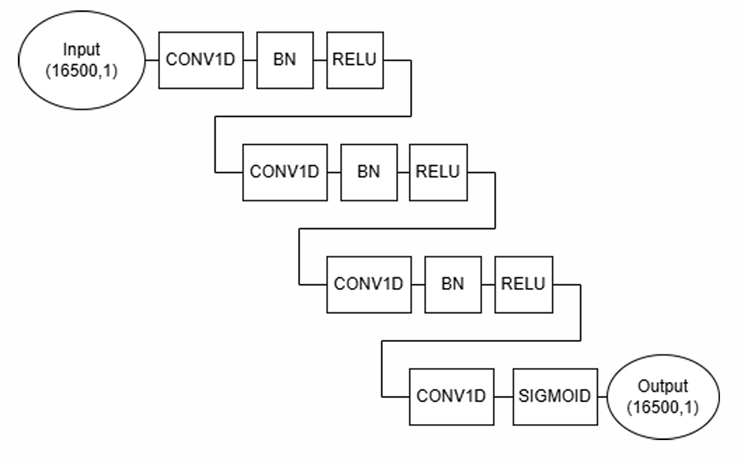

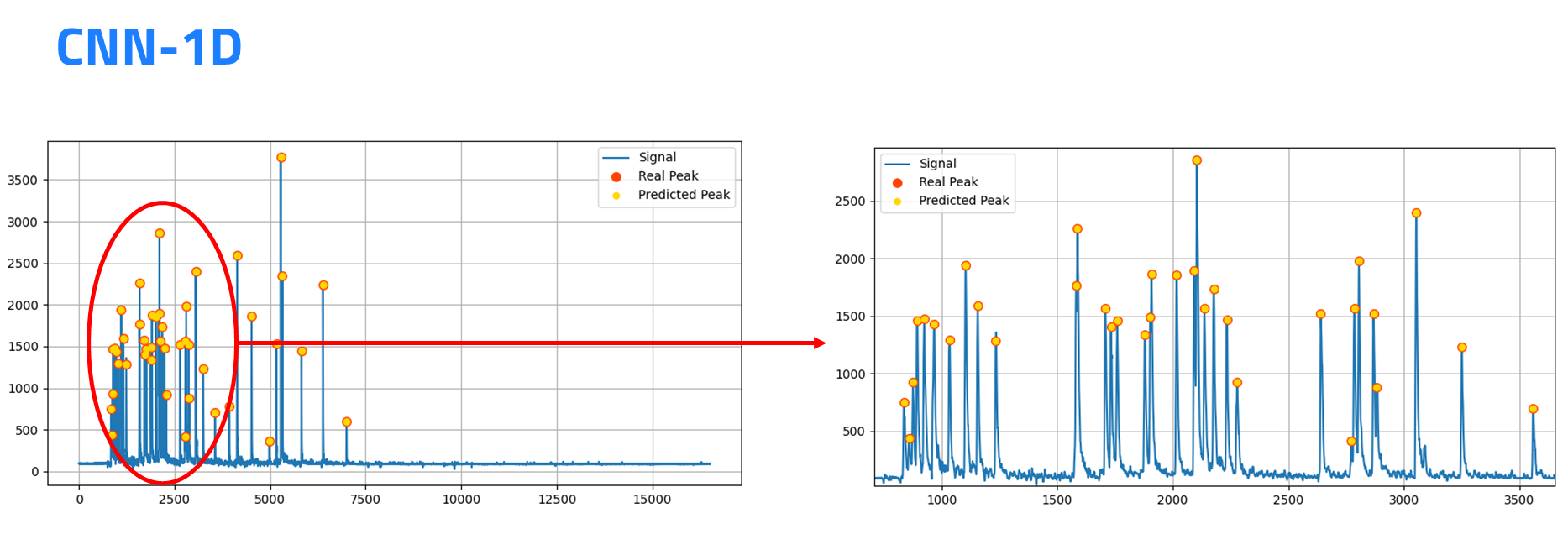

The core algorithmic tool of LEGIMaC is a 1D Convolutional Neural Network (CNN1D) applied directly to the digitised SiPM waveform. Rather than extracting hand-crafted features from the waveform before classification — an approach that inevitably discards information and requires domain-specific engineering — the CNN1D learns to identify relevant temporal patterns from raw ADC samples, making the algorithm inherently adaptable to different scintillator materials and operating conditions simply by retraining on the appropriate dataset.
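To show what a single convolutional stage does to a raw waveform, the sketch below implements the forward pass of one 1D convolution, a ReLU, and a max-pooling step in plain Python. The kernel is hand-set and hypothetical; in a trained network the weights are learned from data, and a real model stacks several such layers.

```python
def conv1d(signal, kernel):
    """Valid-mode 1D cross-correlation, the operation used in CNN layers."""
    k = len(kernel)
    return [sum(signal[i + j] * kernel[j] for j in range(k))
            for i in range(len(signal) - k + 1)]

def relu(values):
    """Rectified linear unit applied element-wise."""
    return [max(0.0, v) for v in values]

def max_pool(values, size):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(values[i:i + size])
            for i in range(0, len(values) - size + 1, size)]

# Hypothetical hand-set kernel that responds to a rising edge.
edge_kernel = [-1.0, 0.0, 1.0]
# Toy ADC samples: baseline, sharp rise, slow linear decay.
waveform = [0.0, 0.0, 0.0, 1.0, 0.8, 0.6, 0.4, 0.2, 0.0, 0.0]

features = max_pool(relu(conv1d(waveform, edge_kernel)), size=2)
print(features)
```

The feature map is non-zero only around the rising edge, which is the sense in which small kernels capture local temporal structure.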

The network architecture was designed to balance expressiveness with the resource constraints of FPGA deployment. It consists of a sequence of 1D convolutional layers with small kernel sizes to capture the local temporal correlations that distinguish dark noise from scintillation signals, followed by pooling layers to aggregate features across the pulse duration and a fully connected classification head. The training procedure used focal loss to handle the class imbalance present in real data, where dark counts greatly outnumber low-energy gamma events, ensuring that the network did not simply learn to classify everything as background.
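Focal loss down-weights examples the network already classifies confidently, so that the abundant, easy background does not dominate the gradient. The sketch below shows the binary form for a single sample; the values of `gamma` and `alpha` are common defaults from the literature and are assumptions here, not the settings used in the project.

```python
import math

def binary_focal_loss(p_signal, is_signal, gamma=2.0, alpha=0.25):
    """Focal loss for one sample: -alpha_t * (1 - p_t)**gamma * log(p_t).

    p_signal is the predicted probability of the signal class.
    With gamma = 0 this reduces to alpha-weighted cross-entropy.
    """
    eps = 1e-12
    p_t = p_signal if is_signal else 1.0 - p_signal
    alpha_t = alpha if is_signal else 1.0 - alpha
    p_t = min(max(p_t, eps), 1.0)  # guard against log(0)
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)

# Compare plain cross-entropy with focal loss for a true signal event
# predicted with decreasing confidence.
for p in (0.95, 0.6, 0.3):
    ce = -math.log(p)
    fl = binary_focal_loss(p, True)
    print(f"p = {p:.2f}: cross-entropy = {ce:.4f}, focal loss = {fl:.6f}")
```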

The same architecture was repurposed with modified output heads for the pile-up resolver task, where the network must estimate the number of overlapping events and their individual arrival times and amplitudes from the composite waveform. This approach proved more robust than traditional deconvolution methods, particularly in the regime where pile-up events arrive within a few nanoseconds of each other and their waveforms are nearly completely superimposed.

Architecture of the CNN1D network: 1D convolutional filters applied to raw ADC waveform samples progressively extract features that encode the temporal profile differences between dark noise, afterpulse, and scintillation events.

Visualisation of CNN1D activations on representative waveforms: the network learns to respond selectively to the slow-decay component characteristic of scintillation signals, suppressing the sharp single-photon dark count responses.

CNN1D inference applied to real detector data: scintillation events and dark noise pulses correctly classified from raw waveforms acquired with a LYSO crystal and SiPM readout under laboratory conditions.

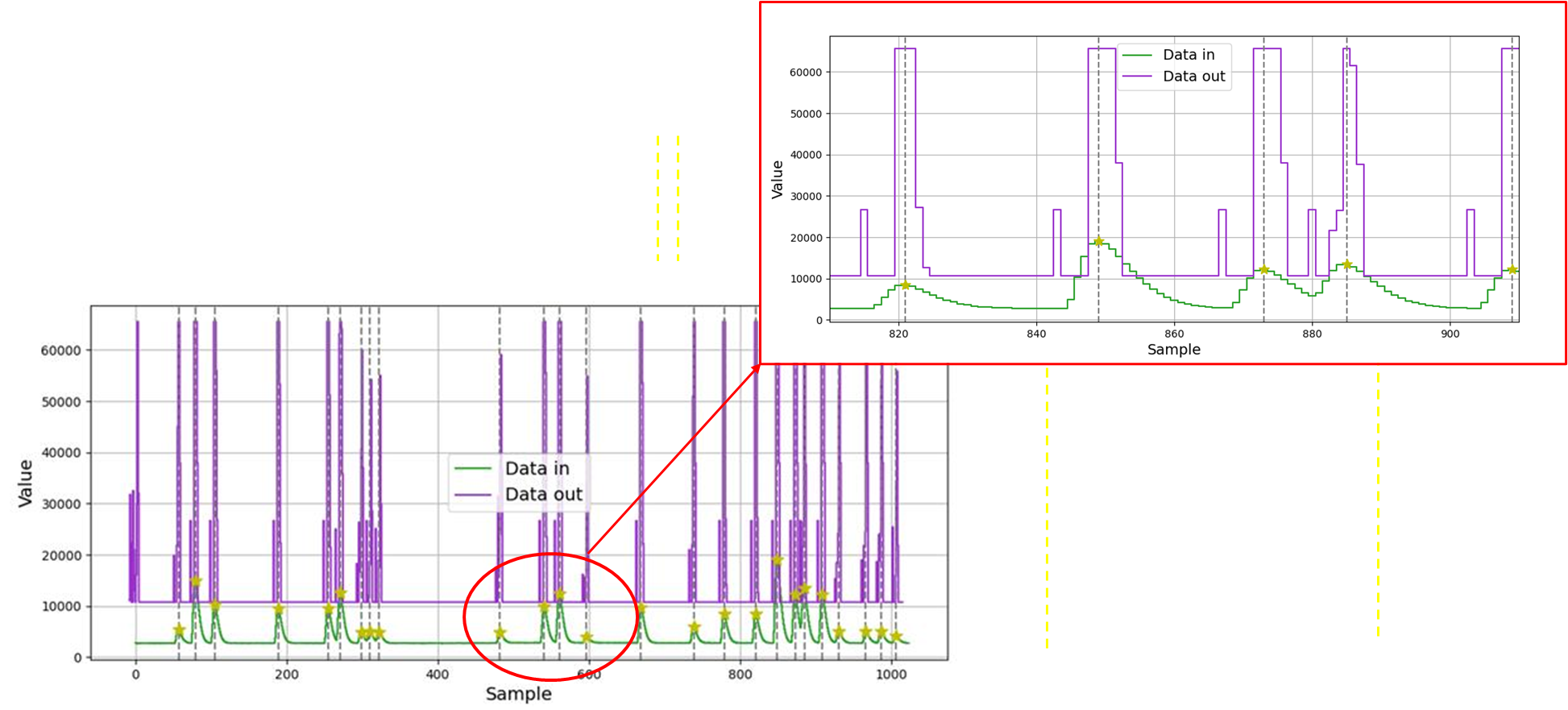

Output of the full data processing pipeline running on FPGA: each waveform is classified in real time, with identified scintillation events tagged for energy reconstruction and dark count pulses rejected.

Time-scaled view of the FPGA processing output across a continuous data stream: the algorithm maintains stable classification performance through transitions between quiescent and high-rate burst conditions, with no loss of events and no degradation in dark noise rejection.

Hardware Implementation

Real-Time Acquisition Chain

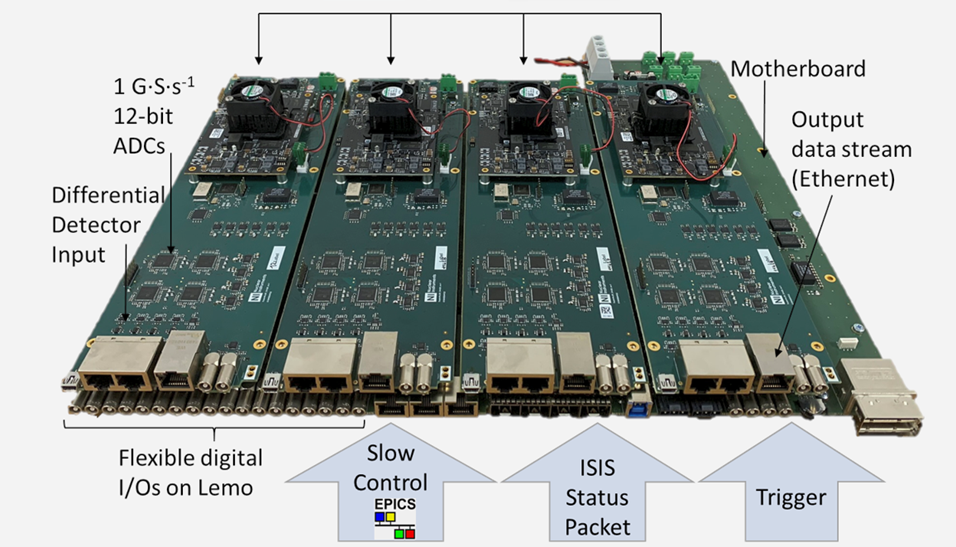

The hardware platform for LEGIMaC centres on the Nuclear Instruments DAQ-121, a high-speed 32-channel digitiser that samples SiPM waveforms at 1 GSps. The digitiser provides the raw ADC stream from which all subsequent processing is derived, and its FPGA fabric hosts the inference engine that runs the CNN1D in real time as data arrives from the detector.

The Nuclear Instruments DAQ-121: a 32-channel, 1 GSps digitiser whose on-board FPGA hosts the CNN1D inference engine for real-time waveform classification.

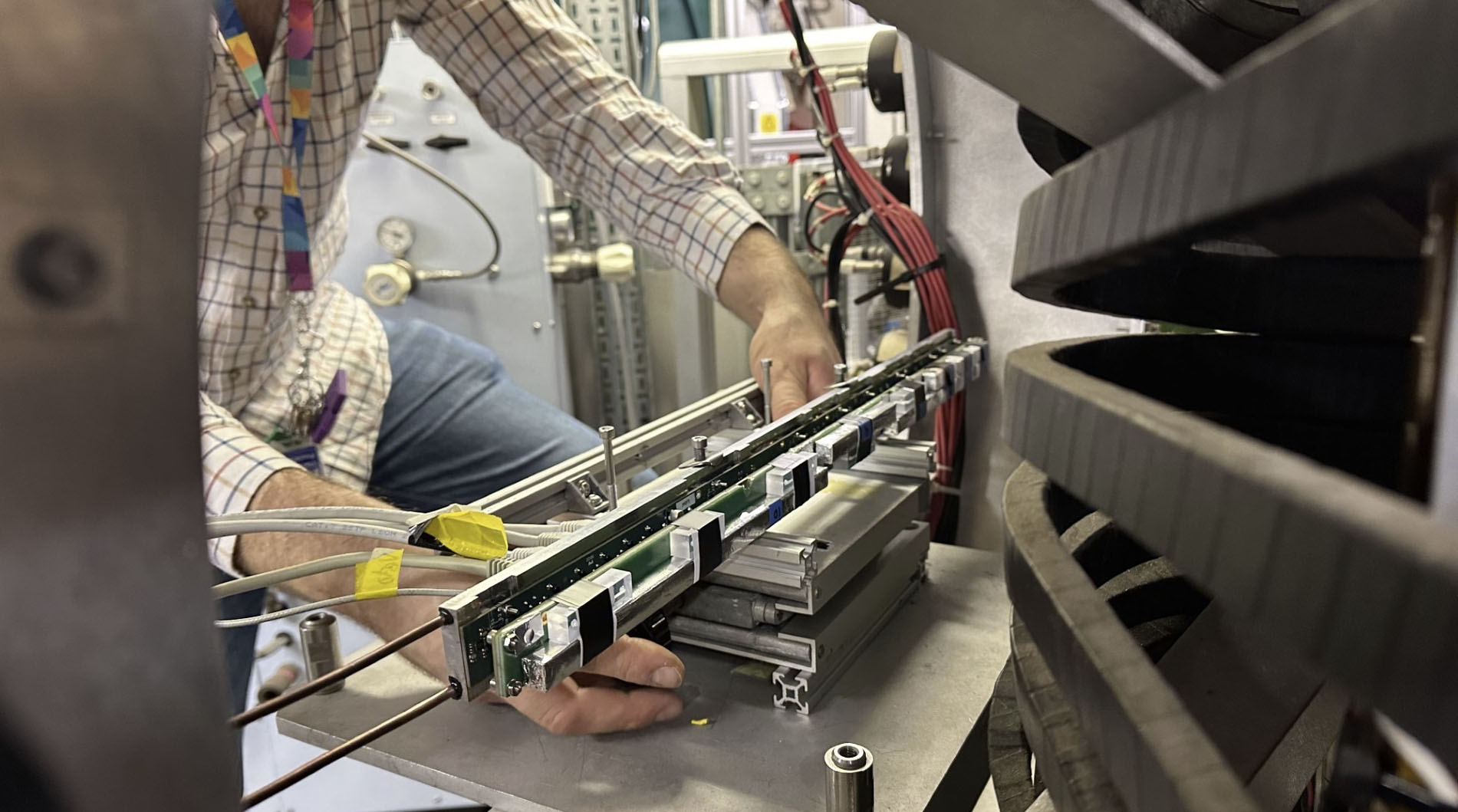

Internal view of the DAQ-121, showing the high-speed ADC front-end and the Zynq UltraScale+ SoC that executes both the data acquisition firmware and the HLS4ML-generated neural network inference.

A streaming data infrastructure based on ZeroMQ and FlatBuffers was developed to transfer waveform data from the digitiser to the neural network with minimal latency and maximum architectural flexibility. This layer allowed the same algorithm to be benchmarked across heterogeneous platforms — CPU, GPU, NPU, and FPGA — with identical input data, enabling direct and fair performance comparisons.

An important firmware contribution was the development of a VHDL-based advanced trigger capable of detecting sudden bursts of events in real time and opening synchronised acquisition windows for a programmable duration. This mechanism allows the system to respond autonomously to transient high-rate conditions, such as the onset of a gamma-ray burst, without requiring intervention from the onboard computer, and without discarding data through the blunt instrument of a rate-dependent veto.
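The logic of such a burst trigger can be modelled in software as a sliding-window rate check. The sketch below is a behavioural model under stated assumptions, not the VHDL implementation: the function name, the parameters, and the rule that a new window cannot open while one is active are all hypothetical.

```python
from collections import deque

def burst_windows(event_times, rate_window, min_events, acq_duration):
    """Return (start, stop) acquisition windows, opened whenever at least
    min_events fall within rate_window. Times are in arbitrary units."""
    recent = deque()
    windows = []
    busy_until = float("-inf")
    for t in sorted(event_times):
        recent.append(t)
        # Drop events that have left the sliding rate window.
        while recent and t - recent[0] > rate_window:
            recent.popleft()
        # Open a new window only if none is currently active.
        if len(recent) >= min_events and t >= busy_until:
            windows.append((t, t + acq_duration))
            busy_until = t + acq_duration
    return windows

quiet = [0, 100, 200, 300]
burst = [400, 402, 404, 406, 408, 410]
print(burst_windows(quiet + burst + [900],
                    rate_window=10, min_events=4, acq_duration=50))
```

In this model the quiescent events never open a window, while the fourth closely spaced event of the burst does.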

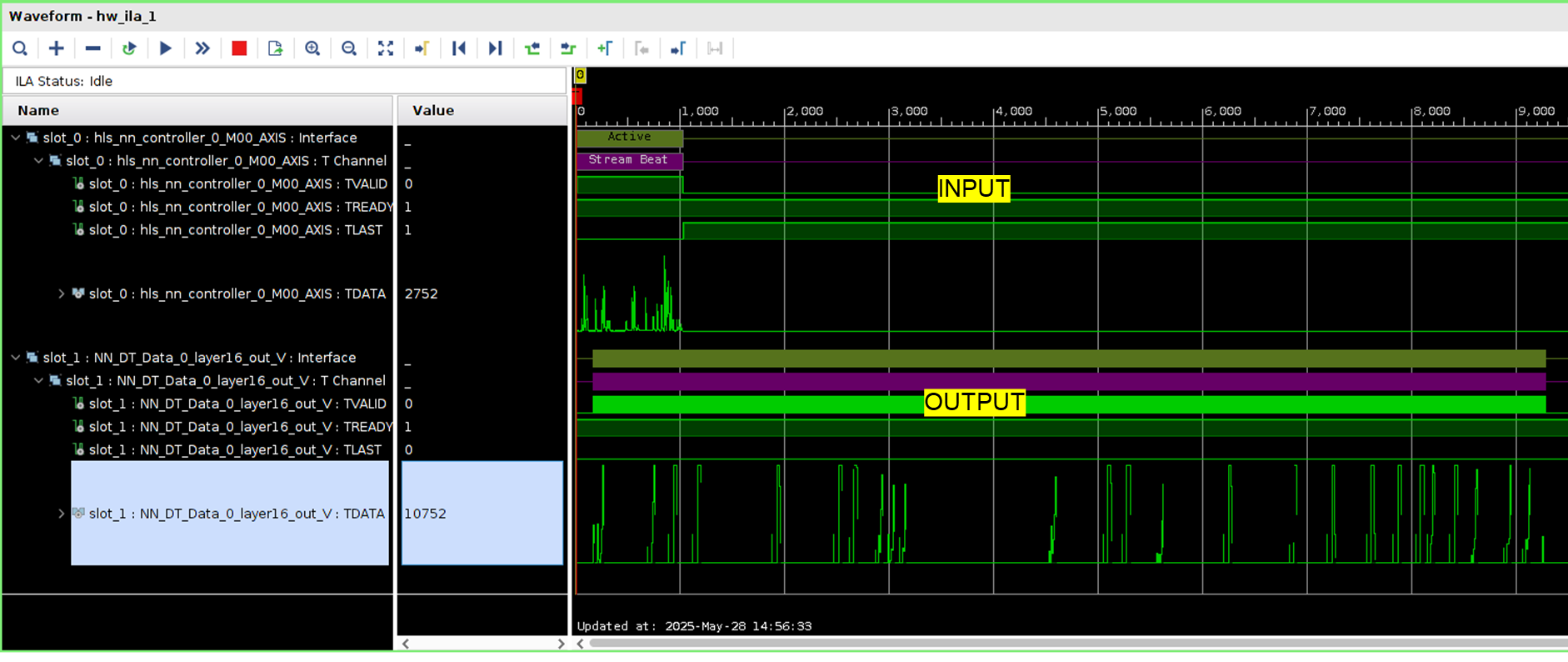

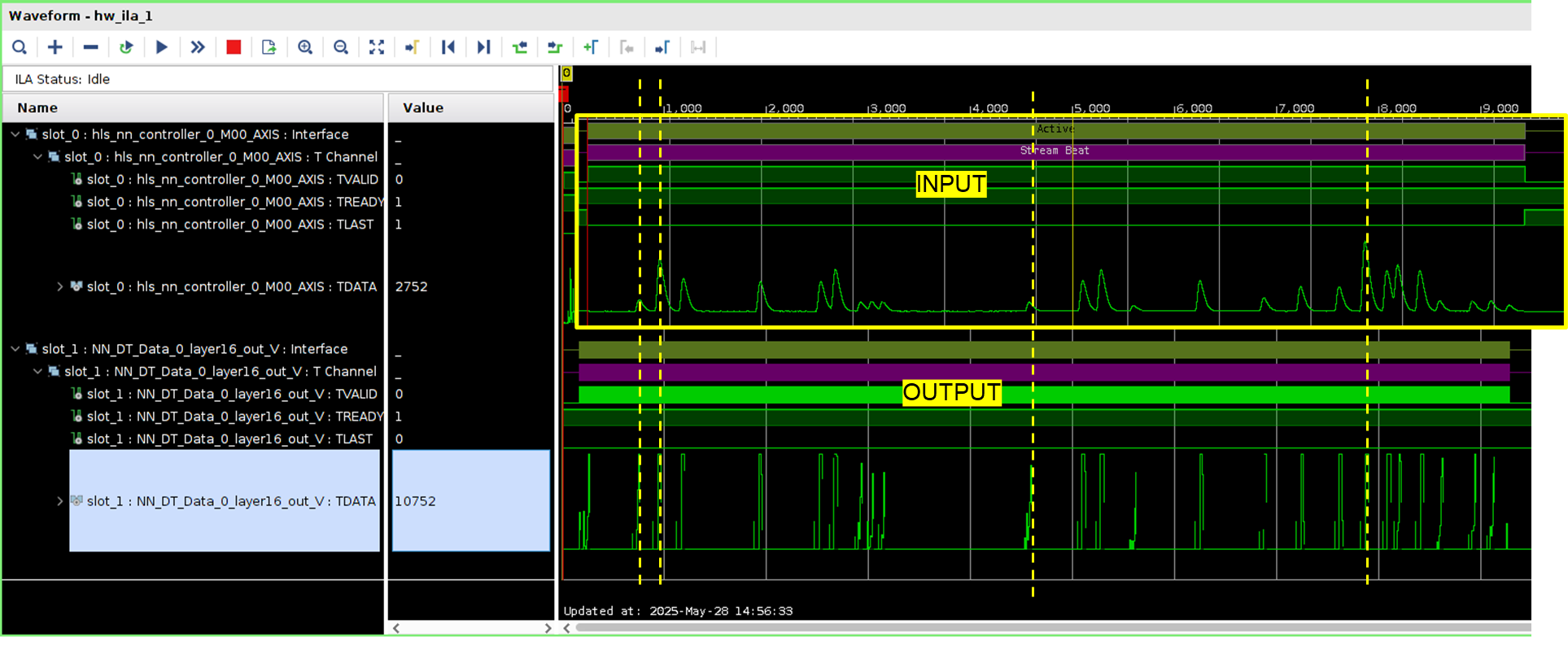

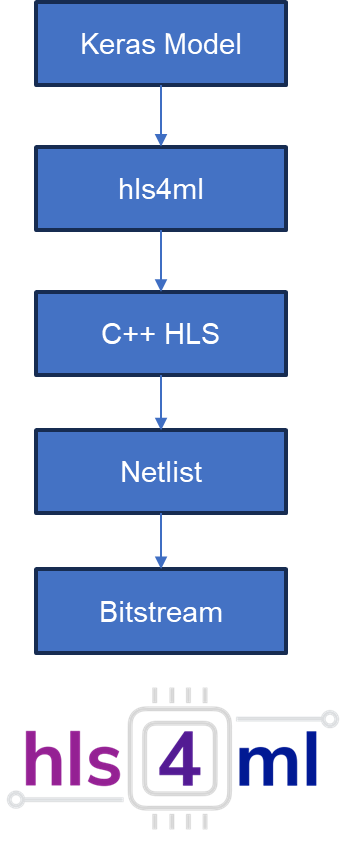

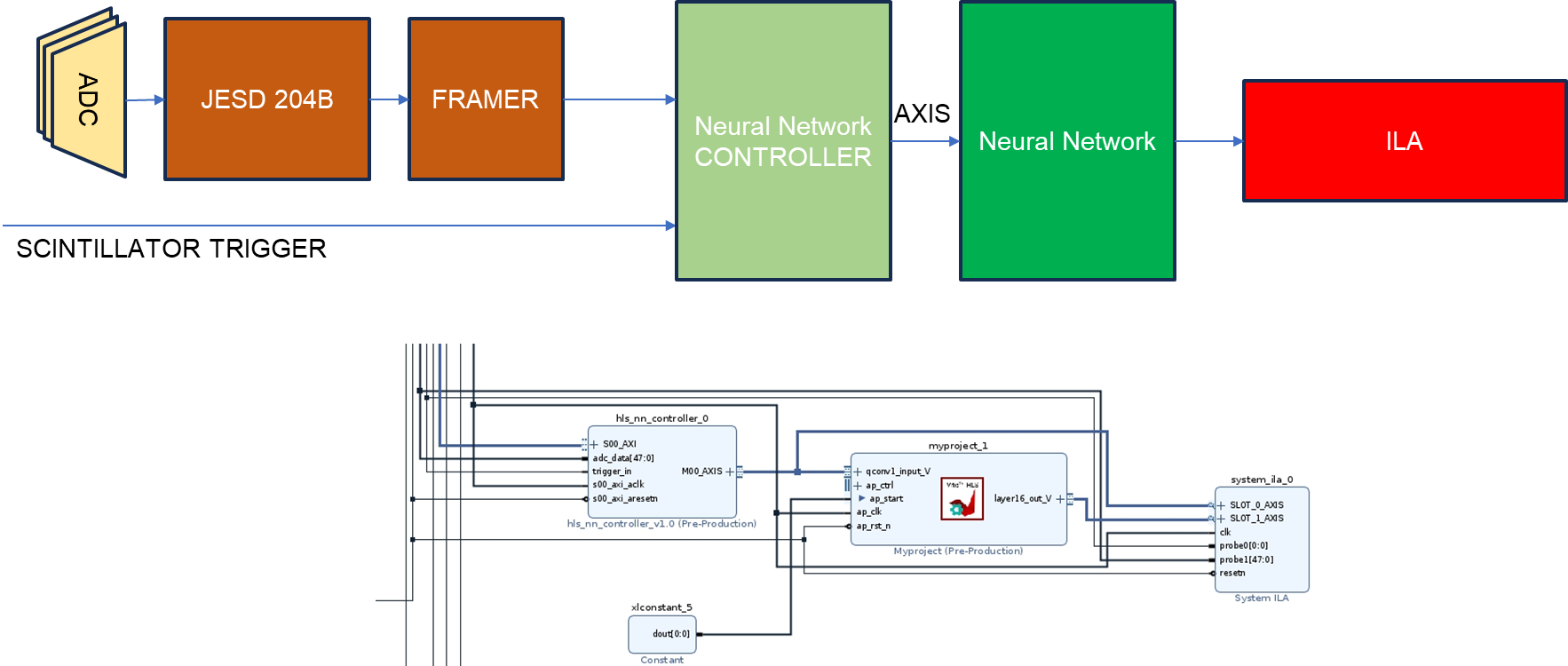

FPGA Deployment via HLS4ML

The path to space-compatible deployment follows the same HLS4ML approach established in the SPARTAN project. The HLS4ML framework translates the trained CNN1D model from its Python/Keras description into C++ code for high-level synthesis, which the vendor HLS tool then compiles into synthesisable RTL (VHDL/Verilog). The result is a firmware core that can be integrated directly into the acquisition FPGA without any intermediate processing steps. This eliminates the latency and power overhead of transferring data to an external processor and back, and keeps the complete inference chain within a single chip whose radiation tolerance can be assessed and managed.
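One practical consequence of this flow is that weights and activations are represented in fixed-point arithmetic on the FPGA. The sketch below illustrates the effect of quantising values to a signed fixed-point grid. The 16-bit width with 6 integer bits is an illustrative assumption, not the precision used in the project, and the truncate-and-saturate behaviour is a simplification of what a hardware fixed-point type may do.

```python
import math

def to_fixed(x, total_bits=16, int_bits=6):
    """Quantise x to a signed fixed-point grid with total_bits bits, of which
    int_bits (including the sign bit) lie left of the binary point.

    Truncates toward minus infinity and saturates on overflow.
    """
    frac_bits = total_bits - int_bits
    scale = 1 << frac_bits
    lo = -(1 << (total_bits - 1))       # most negative integer code
    hi = (1 << (total_bits - 1)) - 1    # most positive integer code
    code = min(max(math.floor(x * scale), lo), hi)
    return code / scale

weights = [0.7312, -0.0421, 1.5, -31.99, 40.0]  # hypothetical values
for w in weights:
    q = to_fixed(w)
    print(f"{w:>9.4f} -> {q:>10.6f}  (error {w - q:+.6f})")
```

Narrower types reduce logic and power at the cost of larger quantisation error, which is why the precision is typically tuned against classification accuracy.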

The HLS4ML workflow: from a trained Keras model through high-level synthesis to a synthesised FPGA firmware core, enabling deployment of the CNN1D on the Zynq UltraScale+ without manual HDL development.

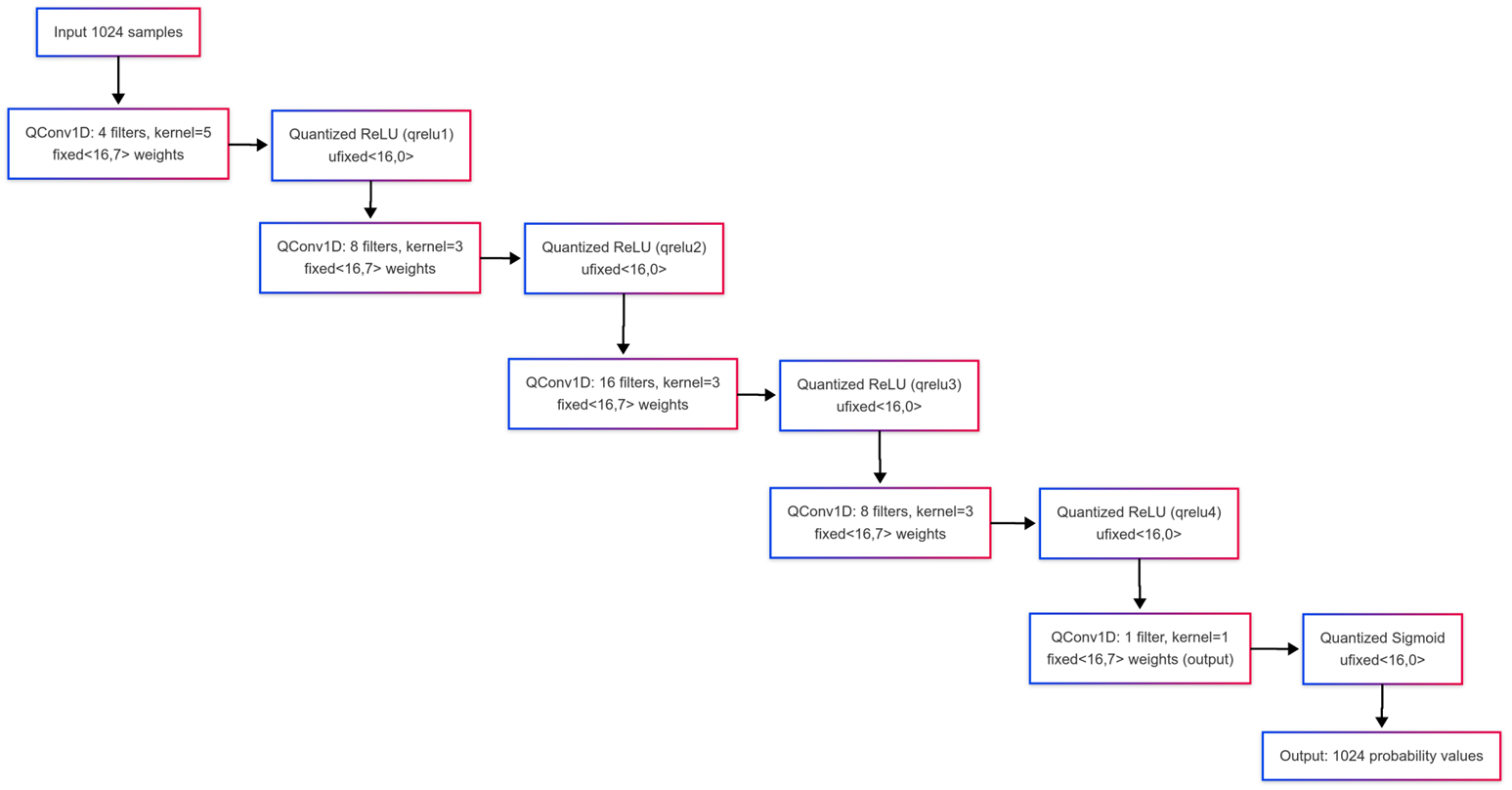

Block diagram of the LEGIMaC CNN1D core as synthesised by HLS4ML and integrated into the DAQ-121 firmware: the inference engine receives digitised waveform samples and produces event classifications within a fixed and predictable latency.

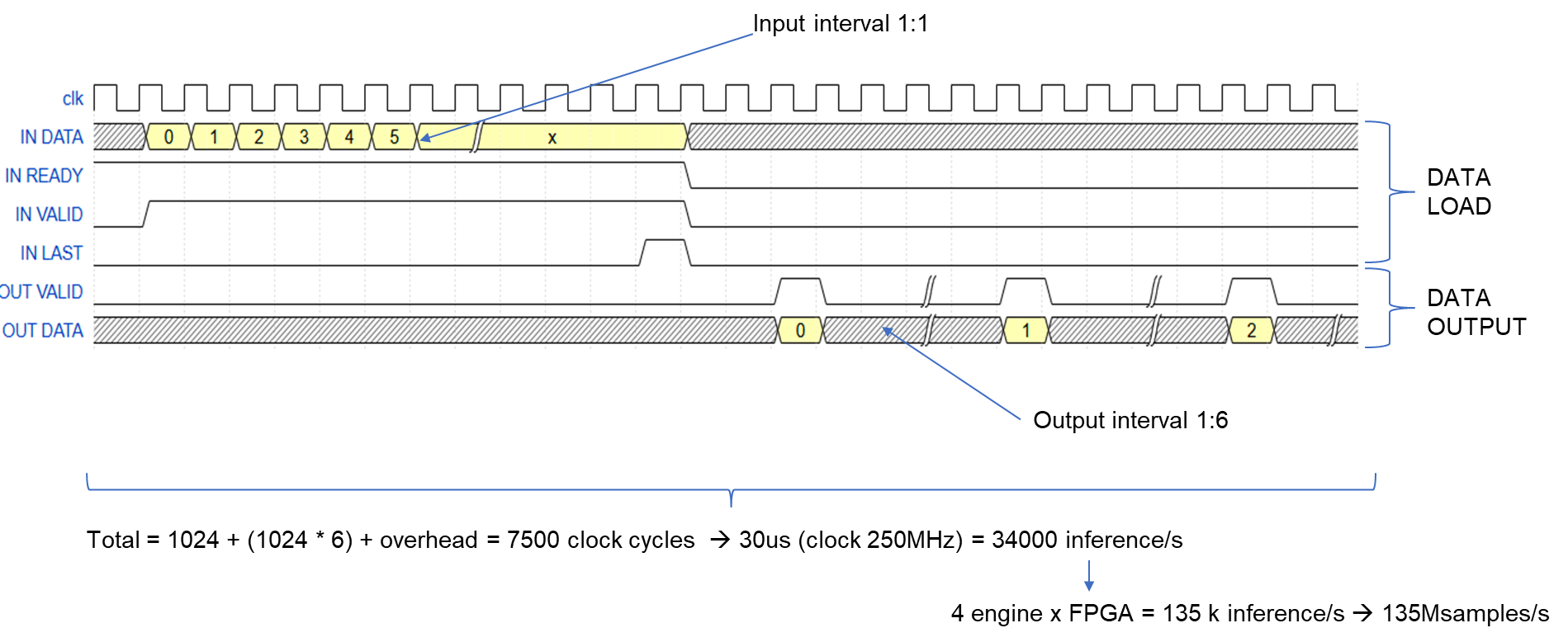

Timing diagram of the HLS4ML inference core: the pipeline accepts one new waveform sample per clock cycle and produces a classification output after a fixed latency, with no dead time between consecutive events.

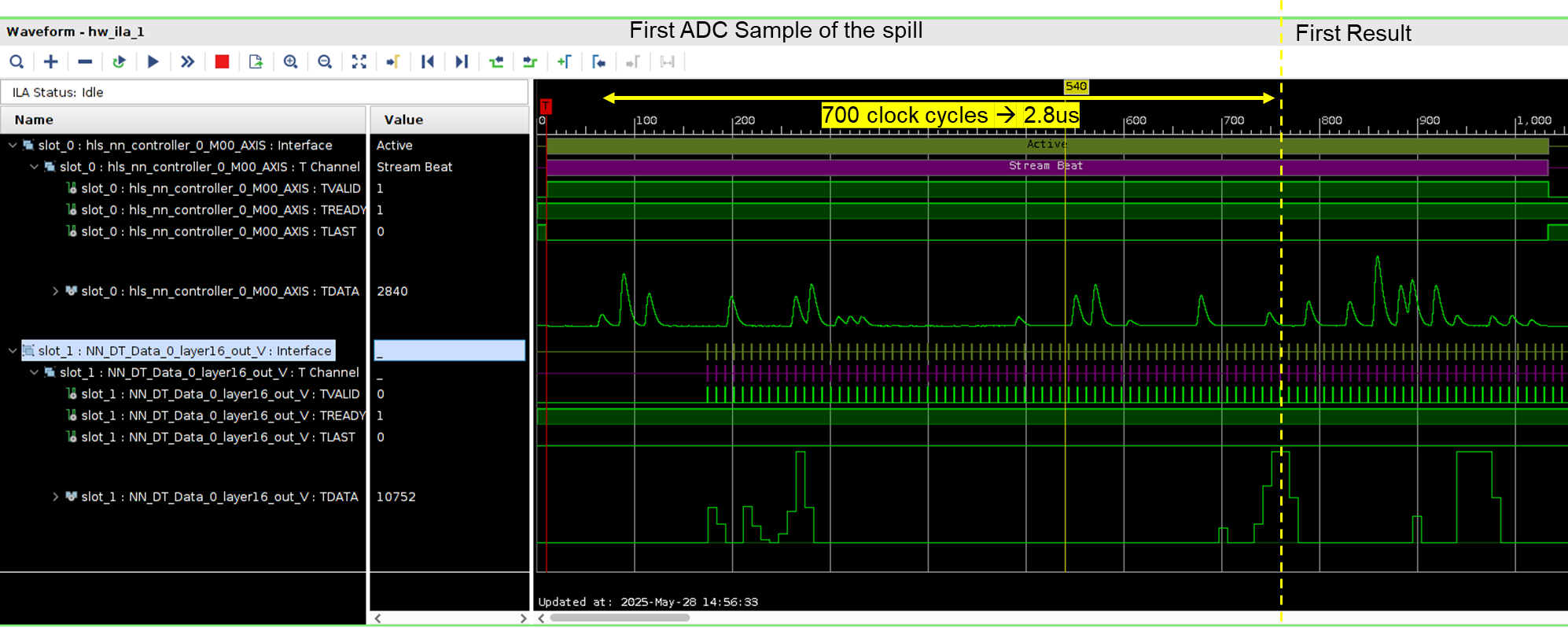

End-to-end inference latency measured on the FPGA: the CNN1D classifier produces a result within a few microseconds of the waveform acquisition, well within the timing requirements of any realistic trigger chain.

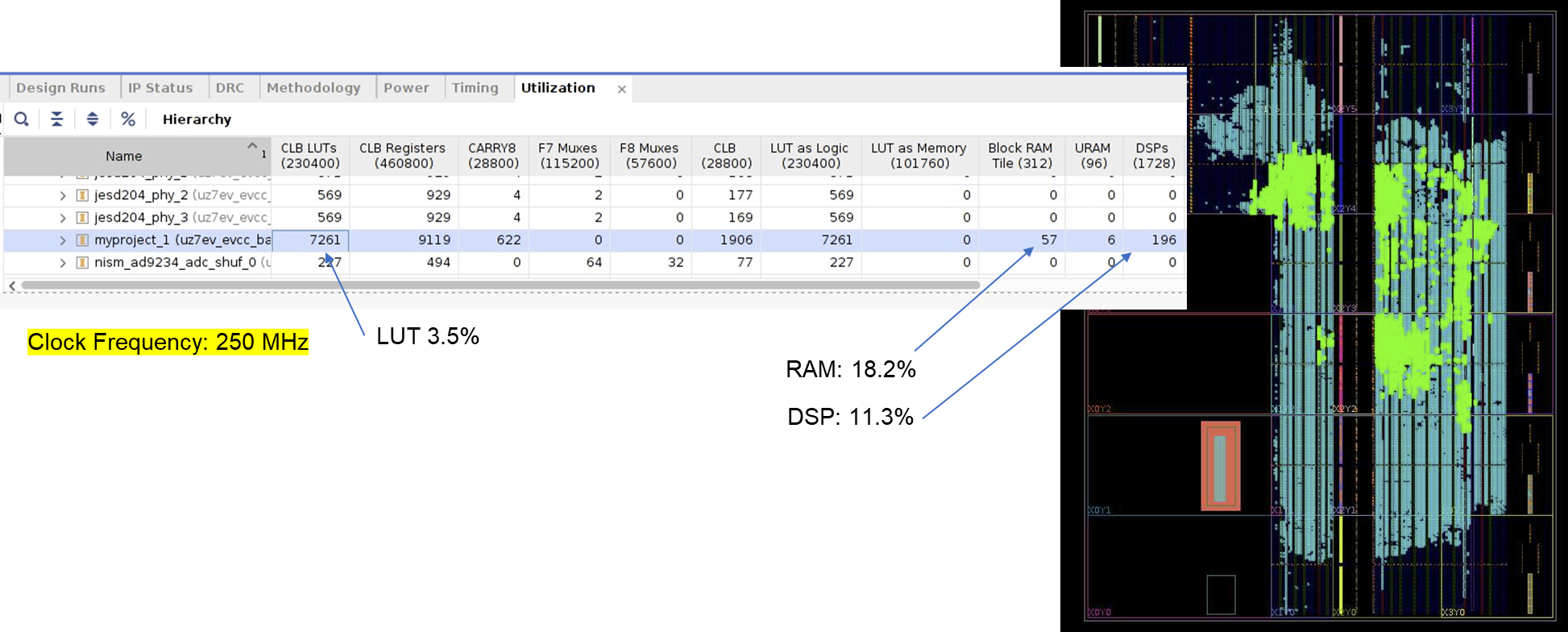

FPGA resource utilisation of the HLS4ML-synthesised CNN1D: the network occupies a modest fraction of the available logic resources on the Zynq UltraScale+, leaving ample headroom for additional algorithm instances or for larger models.

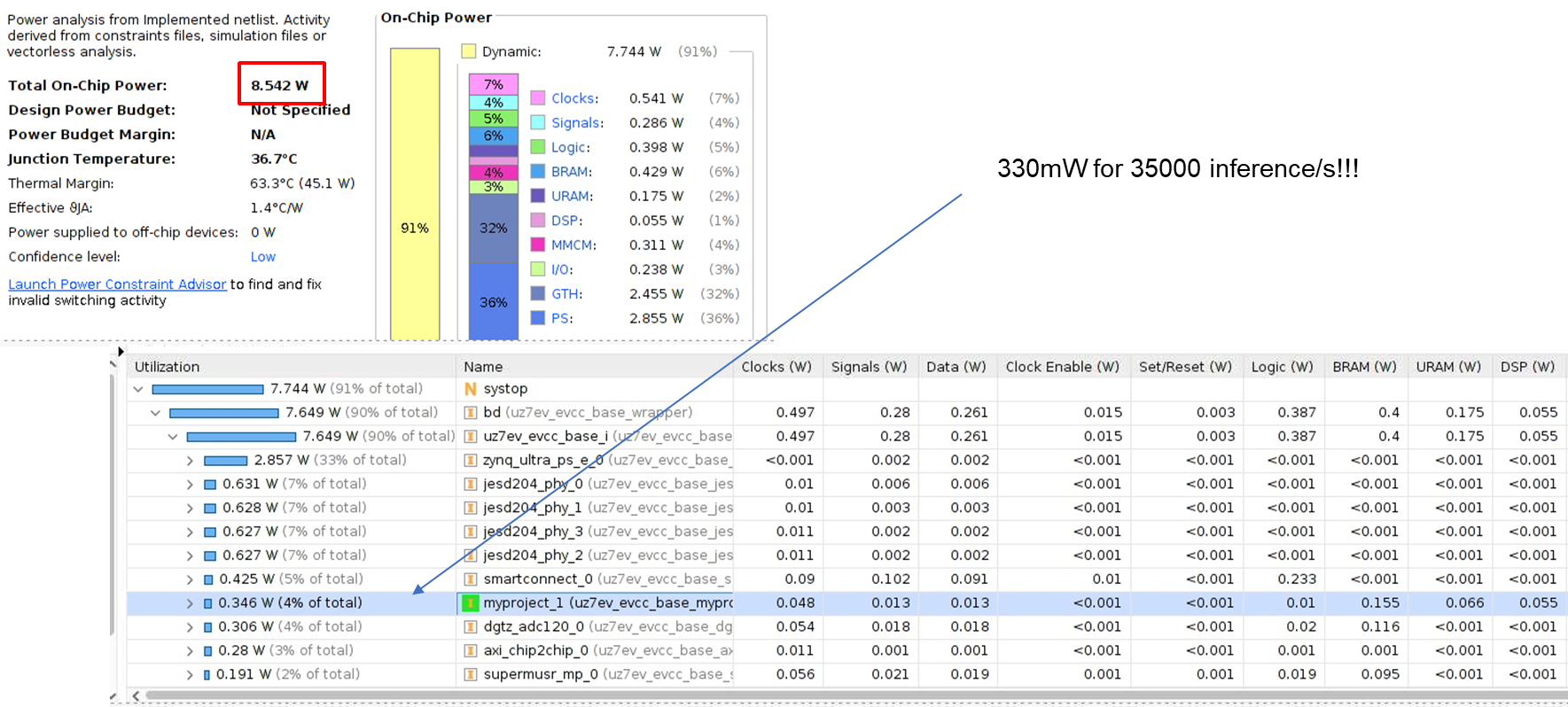

Static and dynamic power estimation for the CNN1D inference core on FPGA. The incremental power budget required by the neural network is a few hundred milliwatts, fully compatible with the power envelope of space instrument electronics.

Complete implementation overview of the LEGIMaC system: the DAQ-121 digitiser feeds raw SiPM waveforms into the Zynq UltraScale+ FPGA, where the HLS4ML-generated CNN1D classifier and pile-up resolver run in parallel, producing tagged event streams for energy reconstruction and scientific analysis.

HLS4ML vs. Xilinx DPU: A Systematic Comparison

The project conducted a careful comparison between the HLS4ML synthesis approach and the Xilinx DPU (Deep Learning Processor Unit) soft-core as alternative deployment strategies for the CNN1D on FPGA. The DPU offers broader model compatibility and the ability to execute different networks without resynthesising the FPGA bitstream, which is attractive during the development phase when model architectures are still evolving. However, it requires significantly more logic resources and consumes more power than the HLS4ML-generated core, and its tightly coupled dependency on the Xilinx tool chain introduces integration complexity that limits its suitability for space-grade implementations.

For the compact CNN1D architecture developed in LEGIMaC, HLS4ML proved clearly superior in terms of efficiency: it produces a fully pipelined datapath tailored to the exact network topology, with no overhead from unused computational paths or from the general-purpose scheduling logic of the DPU. The resulting core occupies less than 10% of the available logic on the Zynq UltraScale+ and adds a power increment of the order of a few hundred milliwatts, figures that remain compatible with the resource budgets of realistic space electronics platforms.

Pulse Shape Discrimination

Beyond the original project objectives, the CNN1D architecture demonstrated a natural extension to Pulse Shape Discrimination (PSD): the distinction between different particle species based on the characteristic waveform produced in the scintillator. Organic scintillators such as EJ276 exhibit different emission time constants for electrons (produced by gamma interactions) and for protons or heavier recoils (produced by neutron interactions), and this difference is encoded in the tail of the digitised waveform. Classical PSD methods rely on the charge comparison technique, integrating the signal over a short and a long time gate and comparing the ratio. This technique is simple and fast, but it is sensitive to the integration gate choice and degrades at low energies where statistical fluctuations in photon counts are large.
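The charge comparison technique described above can be sketched in a few lines. The example below uses the tail-to-total convention and noise-free two-exponential toy pulses; the gate lengths, decay constants, and slow-component fractions are hypothetical values chosen for illustration, not EJ276 parameters.

```python
import math

def psd_ratio(samples, start, short_gate, long_gate):
    """Tail-to-total ratio: (Q_long - Q_short) / Q_long."""
    q_short = sum(samples[start:start + short_gate])
    q_long = sum(samples[start:start + long_gate])
    return (q_long - q_short) / q_long if q_long > 0 else 0.0

def toy_pulse(slow_fraction, n=400, tau_fast=5.0, tau_slow=80.0):
    """Two-component exponential pulse, noise-free, arbitrary units."""
    return [(1 - slow_fraction) * math.exp(-i / tau_fast) / tau_fast
            + slow_fraction * math.exp(-i / tau_slow) / tau_slow
            for i in range(n)]

# Hypothetical slow-component fractions: recoil protons from neutron
# interactions produce a larger slow component than electrons.
gamma_like = toy_pulse(slow_fraction=0.10)
neutron_like = toy_pulse(slow_fraction=0.30)

for name, pulse in (("gamma-like", gamma_like), ("neutron-like", neutron_like)):
    ratio = psd_ratio(pulse, start=0, short_gate=20, long_gate=300)
    print(f"{name}: tail-to-total ratio = {ratio:.3f}")
```

With noise-free pulses the two ratios separate cleanly. Adding photon-counting fluctuations at low energy broadens both distributions until they overlap, which is the regime where the method degrades.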

The CNN1D classifier trained on PSD data demonstrated the ability to extract the discriminating information from the full waveform shape rather than from a simple ratio, achieving reliable gamma–neutron separation down to energies where the charge comparison method begins to fail. This result extends the applicability of the LEGIMaC approach beyond calorimetric imaging into the broader domain of particle identification, with direct relevance to both space-based astroparticle instruments and ground-based neutron detectors.

Results and Assessment for Space Applications

The LEGIMaC project delivered a complete and experimentally validated chain from signal acquisition to real-time classification on FPGA, demonstrating the scientific and technological viability of machine learning as a replacement for conventional trigger electronics in SiPM-based calorimeters.

The dark noise discriminator was validated on both simulated and real laboratory data, demonstrating the ability to push the effective detection threshold significantly below the level accessible to leading-edge trigger systems. By correctly identifying and rejecting dark count pulses while preserving low-amplitude scintillation signals, the algorithm recovers events in the energy region that conventional instruments sacrifice entirely to background suppression.

The pile-up resolver operated effectively at rates representative of the most demanding space conditions, including burst-like scenarios acquired at ISIS. The algorithm successfully decomposed overlapping waveforms into their individual components, recovering energy and timing information that would otherwise be lost or incorrectly assigned — a direct improvement in the scientific efficiency of the instrument, equivalent to extending observation time without any hardware change.

The FPGA implementation via HLS4ML on the Zynq UltraScale+ demonstrated inference latency of a few microseconds, power consumption of a few hundred milliwatts for the neural network core, and resource utilisation well within the bounds of practical space electronics. The availability of the Zynq UltraScale+ family in Military and Space Grade variants indicates that this architecture can be targeted for flight hardware without requiring architectural changes to the algorithm or the firmware.

Impact and Future Directions

For the scientific community, LEGIMaC demonstrates that the low-energy frontier of gamma-ray astrophysics is not primarily a hardware problem but an analysis problem — one that machine learning is uniquely suited to address. Instruments already in development or operation, whose physical detectors are capable of producing useful signal at energies below their current software thresholds, could potentially benefit from this approach through a firmware update alone, without any change to the flight hardware.

For Nuclear Instruments, LEGIMaC has consolidated a set of core competencies that apply directly to a wide range of products and projects. The HLS4ML deployment workflow, the waveform-level CNN1D architecture, and the streaming data infrastructure developed for the project are all immediately reusable components for future instrument development, in contexts ranging from gamma-ray telescope readout to TOF-PET medical scanner front-ends, where the same challenge of separating real events from SiPM dark noise at low energies is encountered.

The extension to Pulse Shape Discrimination broadens the commercial scope further, into neutron/gamma discriminating instruments for nuclear security, environmental monitoring, and safeguards applications — areas where the ability to classify particle species at low energies in real time on compact, low-power hardware represents a substantial performance improvement over existing commercial solutions.